Editor's Note: The following is a guest post from Nick Psaki, principal system engineer at Pure Storage.

We’ve been talking about data growth for the past two decades, but that growth is accelerating like never before. In fact, 90% of the world’s data was created in the past two years alone. One of the biggest changes is that most of the data being created today is generated by machines, not by humans using applications.

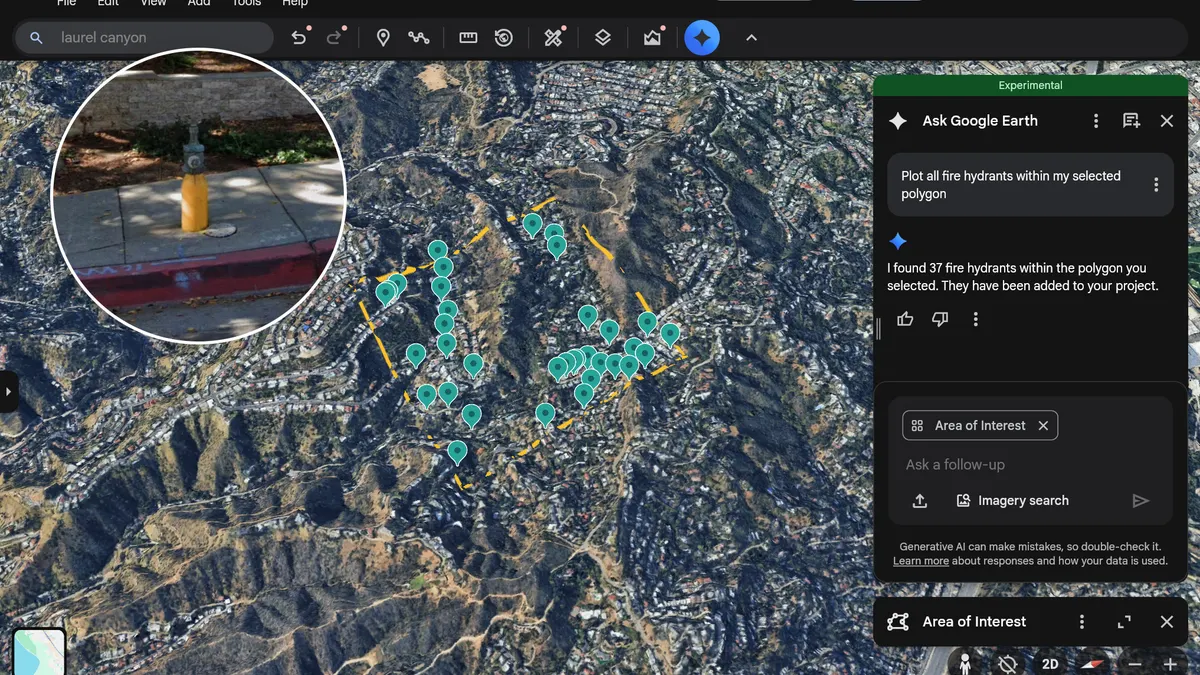

Take internet of things (IoT) sensors, used to monitor everything from traffic patterns and parking to air quality and infrastructure integrity. These sensors are generating massive amounts of data, but are cities set up to process it? This data is only useful if it triggers automatic workflows and the resulting information is disseminated in real time. For that, IT infrastructures need the right building blocks.

Governments are being asked to deliver more services with fewer resources, even as residents expect the kind of service they are used to as consumers. To meet that expectation, governments must be able to operate efficiently and analyze data where it is created. Data powers emerging technologies such as artificial intelligence (AI) and deep learning. It also allows governments to automate infrastructure, which in turn enables greater efficiency and better citizen service.

While the volume of unstructured data, such as geospatial and social data, has exploded, the legacy storage built to house that data has not fundamentally changed in decades. Deep learning, graphics processing units (GPUs) and the ability to store and process very large datasets at high speed are fundamental for AI. Deep learning and GPUs are massively parallel, but legacy storage technologies were not designed for these workloads. They were designed in an era with an entirely different set of expectations around speed, capacity and density.

Today’s infrastructure wasn’t built from scratch to implement a cohesive data strategy. It was built over time, probably project by project, and is likely fragmented and not very agile. Is it time to ask whether cities are ready for a storage transformation?

The short answer: They have to be, and the focus must be on creating an infrastructure that is designed with data in mind. To be prepared for next-generation governing, cities must build IT infrastructure from the bottom up, treat data as a factor in key decisions and follow three key principles.

Consolidate & simplify

To drive efficiency and achieve the potential of data, it is essential for cities to consolidate and move away from data islands. They must focus on consolidating applications into large storage pools, where what used to be tiers of storage can all be simplified into one. This drives efficiency, agility and security — which all play a role in a successful next-generation government.

Process in real time

Data must be processed in real time because doing so makes applications faster and more immersive, shortens time to action, and makes employees more productive and citizens safer and more satisfied.

Embrace on-demand & self-driving storage

On-demand, self-driving storage represents a paradigm shift in how we think about operating storage. What if a storage team could stop being a storage operations team, and instead think about its mission as running an in-house storage service provider? What if it could deliver data-as-a-service, on demand, to each of the development teams, just as those developers could get from the public cloud? Instead of the endless cycle of reactive troubleshooting, what if the storage team could spend its time automating and orchestrating the infrastructure to make it self-driving and ultra-agile? With the pressure for government to run more like a business, there must be a big shift towards efficiency.

A data-centric architecture will simplify performance of core applications while reducing the cost of IT; empower developers with on-demand data, making builds faster; and enable the agility required for DevOps and the continuous integration/continuous delivery (CI/CD) pipeline. It will deliver next-gen analytics as well as act as a data hub for the modern data pipeline, including powering AI initiatives.

In short, a data-centric architecture will give cities the platform they need to stay ahead of these emerging technologies. It’s not about how much data cities have; it’s about what they can do with it. And the most meaningful projects start with data at the center of the IT infrastructure strategy.